I’m trying to catch up on my enormous Pocket backlog, and while surveying its vast and diverse contents, I noticed a few pieces from the same site, eagereyes, all on the same topic: pie charts.

So, naturally, I read them.

(As a sidebar, am I the only person who struggles with this in Pocket or other content-saving services? Am I coining the term “Pocket Zero” right now? Am I the next Merlin Mann?)

Here are the pieces. They’re all quite short, under ten minutes of reading even if you include the discussion with Hadley Wickham in the comments:

A Pair of Pie Chart Papers

Ye Olde Pie Chart Debate

Pie Charts

One thing I was surprised to learn was just how long the Great Pie Chart Debate has been going on – over a hundred years! And yet, the pie chart lives on.

It’s also interesting to me that, despite their ubiquity in popular media, we don’t have a great sense of how or why we perceive pie charts the way we do. It makes me want to fire up the Doc’s eye tracker, just to see how gaze patterns map onto different visualizations.

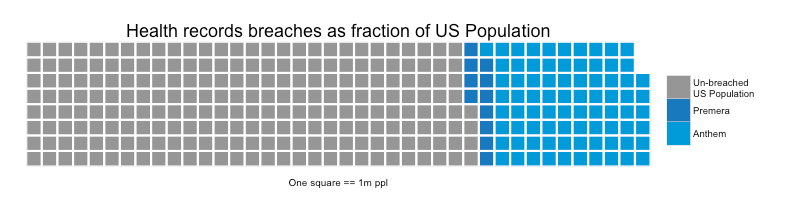

This series of posts also introduced me to the Waffle package for R, which makes it easy to put together a pie chart alternative that I quite like.

It strikes me that comparing the pieces to one another is easier than with a pie chart, while the chart still shows, just as a pie chart does, that each piece is part of a single whole.
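The core idea of a waffle chart is simple enough to sketch without the R package: split a fixed grid of cells (here 10 × 10, so each cell is 1%) among the categories in proportion to their values, then fill the grid row by row. What follows is a rough, text-only Python sketch, not the Waffle package’s API. The function names `waffle_counts` and `render_waffle` are hypothetical, and the largest-remainder rounding (so the cells always add up to exactly 100) is my own assumption about how to handle uneven splits.

```python
def waffle_counts(parts, total_cells=100):
    """Allocate grid cells to each category in proportion to its value.

    Uses largest-remainder rounding (an assumed choice, not the R
    package's method) so the counts always sum to total_cells.
    """
    total = sum(parts.values())
    exact = {k: v * total_cells / total for k, v in parts.items()}
    counts = {k: int(x) for k, x in exact.items()}  # round down first
    shortfall = total_cells - sum(counts.values())
    # Hand leftover cells to the categories with the largest fractional parts
    by_remainder = sorted(exact, key=lambda k: exact[k] - counts[k], reverse=True)
    for k in by_remainder[:shortfall]:
        counts[k] += 1
    return counts


def render_waffle(parts, rows=10, cols=10):
    """Return a text grid (one letter per category) plus a letter-to-label legend.

    Letters A, B, C, ... are assigned in insertion order, so this simple
    sketch supports at most 26 categories.
    """
    counts = waffle_counts(parts, rows * cols)
    legend = {}
    cells = []
    for i, (label, n) in enumerate(counts.items()):
        symbol = chr(ord("A") + i)
        legend[symbol] = label
        cells.extend(symbol * n)
    # Fill the grid row by row so each category forms a contiguous block
    lines = ["".join(cells[r * cols:(r + 1) * cols]) for r in range(rows)]
    return "\n".join(lines), legend


if __name__ == "__main__":
    grid, legend = render_waffle({"Rent": 45, "Food": 30, "Other": 25})
    print(grid)
    print(legend)
```

Filling row by row keeps each category in one contiguous block, which is what makes the pieces easy to count and compare against one another.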

I’m excited to play around with this package over the next few days. I’ll have to dig around and see whether it’s supported in Shiny yet!